Machine Learning: Measuring Similarity and Distance

Measuring similarity or distance between two data points is fundamental to many Machine Learning algorithms such as K-Nearest-Neighbor, Clustering ... etc.

Join the DZone community and get the full member experience.

Join For FreeCurator's Note: If you like the post below, feel free to check out the Machine Learning Refcard, authored by Ricky Ho!

Measuring similarity or distance between two data points is fundamental to many Machine Learning algorithms such as K-Nearest-Neighbor, Clustering ... etc. Depending on the nature of the data point, various measurements can be used.

Distance between numeric data points

When the dimension of a data point is numeric, the general form is called the Minkowski distance, as shown here:

When p = 2, this is equivalent to Euclidean distance. When p = 1, this is equivalent to Manhattan distance.

This measure is independent of the underlying data distribution. But what if the value along the x-dimension is much bigger than the value along the y-dimension? We need to bring all of them down to scale first. A common way is to perform a z-transform where each data point first subtracts the mean value, then divides the standard deviation.

This measure, although taking into consideration the distribution of each dimension, assumes the dimensions are independent of each other. But what if the x-dimension has some correlation with the y-dimension? To consider correlations between different dimensions, we use this:

If we care about the direction of the data rather than the magnitude, then using the cosine distance is a common approach. It computes the dot product of the two data points divided by the product of their magnitude. Cosine distance, together with the term/document matrix, is commonly used to measure the similarity between documents.

Distance between categorical data points

Since we have no ordering between categorical values, we can only measure whether the categorical values are the same or not. Basically we are measuring the degree that attribute values overlap. Hamming distance can be used to measure how many attributes must be changed in order to match one another. We can calculate the ratio to determine the similarity (or difference) between two data points using the simple matching coefficient:

noOfMatchAttributes / noOfAttributes

However, in some cases, equality of certain values don't mean anything. For example, let's say the data point represents a user with attributes representing each movie. The data point contains a high dimensional binary value indicating that the user has or has not seen the movie (1 represent yes and 0 represent no). Given that most users only see a small portion of all movies, if both users haven't seen a particular movie (a value of 0 for both), it doesn't indicate a similarity between the users. On the other hand, if both users saw the same movie (a value of 1 for each), it is implied that the users have many similarities. In this case, equality of 1 should carry a much higher weight than equality of 0. This leads to the Jaccard similarity, as seen here:

noOfOnesInBoth / (noOfOnesInA + noOfOnesInB - noOfOnesInAandB)

Whether or not values are matching, though, if the category is structured as a Tree hierarchy, then the distance between the two categories can be quantified by the path length of their common parent. For example, "/product/spot/ballgame/basketball" is closer to "/product/spot/ballgame/soccer/shoes" than "/product/luxury/handbags" because the common parent has a longer path.

Distance between mixed categorical and numeric data points

When the data point contains a mixture of numeric and categorical attributes, we can calculate the distance of each group and then treat each measure of distance as a separate dimension (numeric value).

Distance between sequence (String, TimeSeries)

In case each attribute represents an element of a sequence, we need a different way to measure the distance. For example, let's say each data point is a string (which contains a sequence of characters) — then edit distance is a common measuring tool. Basically, edit distance reveals how many "modifications" (which can be insert, modify, delete) are needed to change stringA into stringB. This is usually calculated by using thedynamic programming technique.

Time Series is another example of sequence data. Similar to the concept of edit distance, Dynamic Time Warp distorts the time dimension by adding more data points in both time series, minimizing the square error between corresponding pairs. We discover where to add these data points by using a similar dynamic programming technique. Here is a very good paper that describe the details.

Distance between nodes in a network

In a homogenous undirected graph (nodes are of the same type), distance between nodes can be measured by the shortest path.

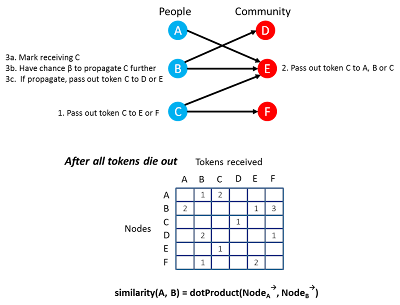

In a bi-partite graph, there are two types of nodes in which each node only connects to the other type. (e.g., People joining communities.) Node similarity can be measured by analyzing how similar their connected communities are as long as the nodes are the same type.

SimRank is an iterative algorithm that computes the similarity of nodes. It does this by adding up the similarities between all node pairs of different types. Other types of node similarities are computed in the same way.

We can also use a probabilistic approach, such as RandomWalk, to determine the similarity. Each 'people node' will pass a token (label with the people's name) along a randomly picked, connected 'community node' (weighted by the strength of connectivity). Each community node will propagate the received token back to a randomly picked people node. Now the people who received the propagated token may drop the token (with a chance beta) or propagate it to a randomly chosen community again. This process continues until all the tokens are gone (since they have a chance of being dropped). After that, we obtain the trace Matrix and compute the similarity based on the dot product of the tokens it receives.

Distance between population distribution

Instead of measuring distance between individual data points, we can also compare a collection of data points (e.g., populations), and measure the distance between them. In fact, one important part of statistics is to measure the distance between two groups of samples to see if the "difference" is significant enough to conclude they are from different populations.

Let's say the population contains members that belong to different categories and we want to measure if population A and population B have the same or different proportions of members across such categories. We can use Chi-Square or KL-Divergence to measure their distance of separation.

In case every member of the population has two different numeric attributes (e.g. weight and height), and we want to infer one attribute from the other, given that they correlate, the correlation coefficient quantifies their degree of correlation. And it does not matter whether these two attributes are moving along the same direction (heavier people are taller), in a different direction (heavier people are shorter), or independently of one another. The correlation coefficient ranges from -1 (negatively correlated) to 0 (no correlation) to 1 (positively correlated).

If the two attributes are categorical (rather than numeric), then mutual information is a common way to measure their dependencies. This determines whether knowing the value of one attribute will help infer the value of the other.

Suppose two judges rank a collection of items and we are interested in how much their ranking orders agree. We can use Spearman's rank coefficient to measure their degree of consensus in the ranking order.

Published at DZone with permission of Ricky Ho, DZone MVB. See the original article here.

Opinions expressed by DZone contributors are their own.

Comments